The paper “Maximum entropy optimal control of continuous-time dynamical systems” has been accepted for publication in the IEEE Transactions on Automatic Control.

Maximum entropy optimal control of continuous-time dynamical systems

by Jeongho Kim and Insoon Yang

Abstract:

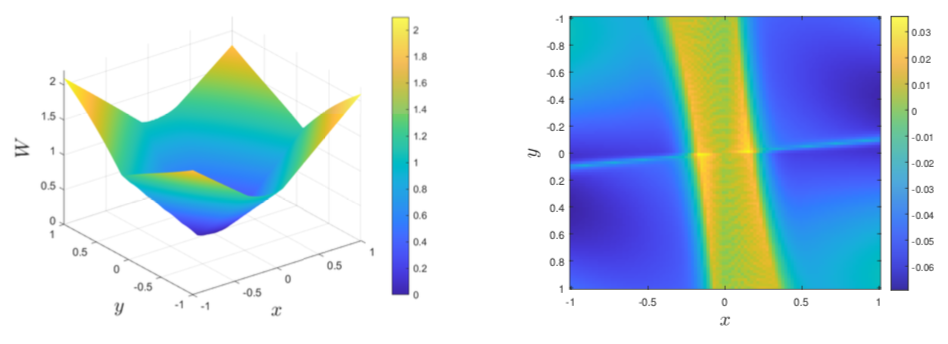

Maximum entropy reinforcement learning methods have been successfully applied to a range of challenging sequential decision-making and control tasks. However, most existing techniques are designed for discrete-time systems, although there is growing interest in handling physical processes evolving in continuous time. As a first step toward their extension to continuous-time systems, this paper aims to study the theory of maximum entropy optimal control in continuous time. Applying the dynamic programming principle, we derive a novel class of Hamilton–Jacobi–Bellman (HJB) equations and prove that the optimal value function of the maximum entropy control problem corresponds to the unique viscosity solution of the HJB equation. We further show that the optimal control is uniquely characterized as Gaussian in the case of control-affine systems and that, for linear-quadratic problems, the HJB equation reduces to a Riccati equation, which can be used to obtain an explicit expression of the optimal control. The results of our numerical experiments demonstrate the performance of our maximum entropy method in continuous-time optimal control and reinforcement learning problems.
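To make the linear-quadratic case in the abstract more concrete, here is a minimal Python sketch. It is not the authors' code and may not match the paper's exact conventions. It assumes a finite-horizon problem with dynamics dx/dt = Ax + Bu, running cost x'Qx + u'Ru minus a temperature `alpha` times the entropy of the control distribution, and terminal cost x'P_T x. Under these assumed conventions the quadratic part of the value function satisfies the standard Riccati differential equation, and the optimal control at time 0 is Gaussian with mean -R^{-1}B'Px and covariance (alpha/2)R^{-1}. The function names, the RK4 integrator, and the double-integrator example are all hypothetical choices made for illustration.

```python
import numpy as np


def riccati_rhs(P, A, B, Q, R_inv):
    """Right-hand side of the Riccati ODE written in reversed time s = T - t."""
    return Q + A.T @ P + P @ A - P @ B @ R_inv @ B.T @ P


def solve_riccati(A, B, Q, R, P_T, T, n_steps=2000):
    """Integrate the Riccati ODE from P(T) = P_T back to t = 0 with classical RK4.

    Returns P(0). This is an illustrative integrator, not a production solver.
    """
    R_inv = np.linalg.inv(R)
    h = T / n_steps
    P = np.array(P_T, dtype=float)
    for _ in range(n_steps):
        k1 = riccati_rhs(P, A, B, Q, R_inv)
        k2 = riccati_rhs(P + 0.5 * h * k1, A, B, Q, R_inv)
        k3 = riccati_rhs(P + 0.5 * h * k2, A, B, Q, R_inv)
        k4 = riccati_rhs(P + h * k3, A, B, Q, R_inv)
        P = P + (h / 6.0) * (k1 + 2.0 * k2 + 2.0 * k3 + k4)
        P = 0.5 * (P + P.T)  # keep P symmetric despite round-off
    return P


def gaussian_policy(P, B, R, alpha, x):
    """Mean and covariance of the Gaussian control at state x.

    Assumes the cost convention x'Qx + u'Ru - alpha * entropy stated above;
    other conventions (e.g. a factor 1/2 in the cost) change the covariance scale.
    """
    R_inv = np.linalg.inv(R)
    mean = -R_inv @ B.T @ P @ x
    cov = 0.5 * alpha * R_inv
    return mean, cov


# Hypothetical example: a double integrator with unit weights.
A = np.array([[0.0, 1.0], [0.0, 0.0]])
B = np.array([[0.0], [1.0]])
Q = np.eye(2)
R = np.array([[1.0]])

P0 = solve_riccati(A, B, Q, R, P_T=np.zeros((2, 2)), T=10.0)
mean, cov = gaussian_policy(P0, B, R, alpha=0.2, x=np.array([1.0, 0.0]))

# Draw one exploratory control from the Gaussian policy.
rng = np.random.default_rng(0)
u = rng.multivariate_normal(mean, cov)
print("P(0) =", P0)
print("policy mean =", mean, "covariance =", cov, "sample =", u)
```

In this sketch the temperature `alpha` only affects the spread of the control distribution, while the mean coincides with the classical LQR feedback. Setting `alpha` close to zero therefore recovers a nearly deterministic controller.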

![[TAC] Maximum entropy optimal control in continuous time](http://coregroup.snu.ac.kr/wp-content/uploads/2018/11/Depositphotos_4892867_xl-2015.jpg)